Today’s AI landscape is defined by the ways in which neural networks are unlike human brains. A toddler learns how to communicate effectively with only a thousand calories a day and regular conversation; meanwhile, tech companies are reopening nuclear power plants, polluting marginalized communities, and pirating terabytes of books in order to train and run their LLMs.

But neural networks are, after all, neural—they’re inspired by brains. Despite their vastly different appetites for energy and data, large language models and human brains do share a good deal in common. They’re both made up of millions of subcomponents: biological neurons in the case of the brain, simulated “neurons” in the case of networks. They’re the only two things on Earth that can fluently and flexibly produce language. And scientists barely understand how either of them works.

I can testify to those similarities: I came to journalism, and to AI, by way of six years of neuroscience graduate school. It’s a common view among neuroscientists that building brainlike neural networks is one of the most promising paths for the field, and that attitude has started to spread to psychology. Last week, the prestigious journal Nature published a pair of studies showcasing the use of neural networks for predicting how humans and other animals behave in psychological experiments. Both studies propose that these trained networks could help scientists advance their understanding of the human mind. But predicting a behavior and explaining how it came about are two very different things.

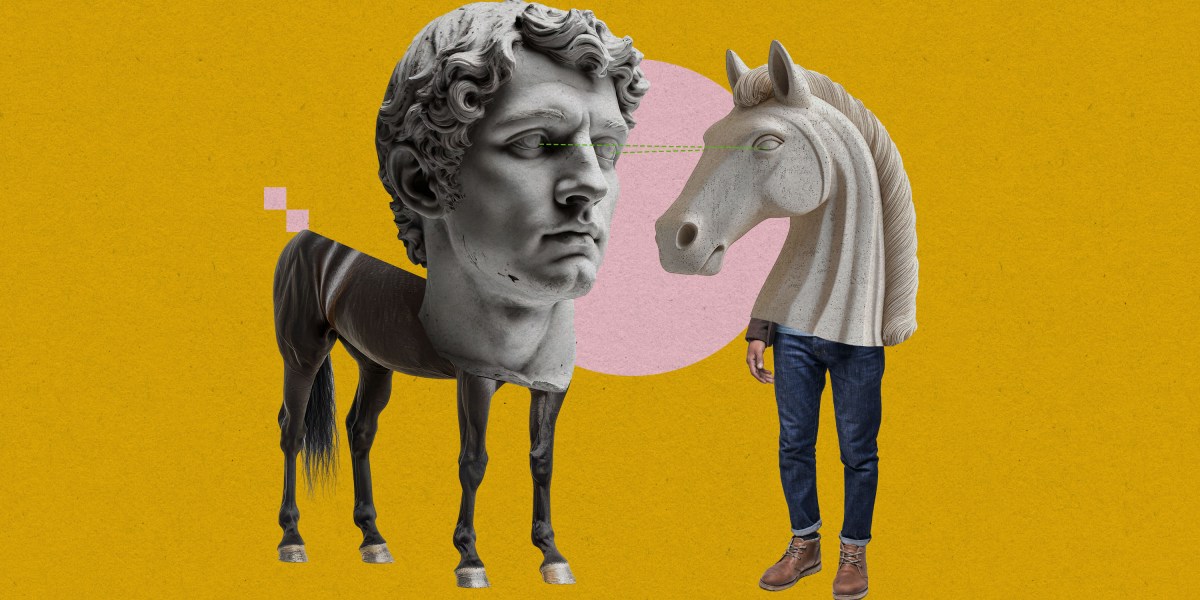

In one of the studies, researchers transformed a large language model into what they refer to as a “foundation model of human cognition.” Out of the box, large language models aren’t great at mimicking human behavior: they behave logically in settings where humans abandon reason, such as casinos. So the researchers fine-tuned Llama 3.1, one of Meta’s open-source LLMs, on data from 160 psychology experiments, which involved tasks like choosing from a set of “slot machines” to get the maximum payout or remembering sequences of letters. They called the resulting model Centaur.

Compared with conventional psychological models, which use simple math equations, Centaur did a far better job of predicting behavior. Accurate predictions of how humans respond in psychology experiments are valuable in and of themselves: For example, scientists could use Centaur to pilot their experiments on a computer before recruiting, and paying, human participants. In their paper, however, the researchers propose that Centaur could be more than just a prediction machine. By interrogating the mechanisms that allow Centaur to effectively replicate human behavior, they argue, scientists could develop new theories about the inner workings of the mind.

But some psychologists doubt whether Centaur can tell us much about the mind at all. Sure, it’s better than conventional psychological models at predicting how humans behave—but it also has a billion times more parameters. And just because a model behaves like a human on the outside doesn’t mean that it functions like one on the inside. Olivia Guest, an assistant professor of computational cognitive science at Radboud University in the Netherlands, compares Centaur to a calculator, which can effectively predict the response a math whiz will give when asked to add two numbers. “I don’t know what you would learn about human addition by studying a calculator,” she says.

Even if Centaur does capture something important about human psychology, scientists may struggle to extract any insight from the model’s millions of neurons. Though AI researchers are working hard to figure out how large language models work, they’ve barely managed to crack open the black box. Understanding an enormous neural-network model of the human mind may not prove much easier than understanding the thing itself.

One alternative approach is to go small. The second of the two Nature studies focuses on minuscule neural networks, some containing only a single neuron, that can nevertheless predict behavior in mice, rats, monkeys, and even humans. Because the networks are so small, it’s possible to track the activity of each individual neuron and use that data to figure out how the network is producing its behavioral predictions. And while there’s no guarantee that these models function like the brains they were trained to mimic, they can, at the very least, generate testable hypotheses about human and animal cognition.

There’s a cost to comprehensibility. Unlike Centaur, which was trained to mimic human behavior in dozens of different tasks, each tiny network can predict behavior in only one specific task. One network, for example, is specialized for making predictions about how people choose among different slot machines. “If the behavior is really complex, you need a large network,” says Marcelo Mattar, an assistant professor of psychology and neural science at New York University who led the tiny-network study and also contributed to Centaur. “The compromise, of course, is that now understanding it is very, very difficult.”

This trade-off between prediction and understanding is a key feature of neural-network-driven science. (I also happen to be writing a book about it.) Studies like Mattar’s are making some progress toward closing that gap: as tiny as his networks are, they can predict behavior more accurately than traditional psychological models. The research into LLM interpretability happening at places like Anthropic is making progress, too. For now, however, our understanding of complex systems, from humans to climate systems to proteins, is lagging further and further behind our ability to make predictions about them.

This story originally appeared in The Algorithm, our weekly newsletter on AI.